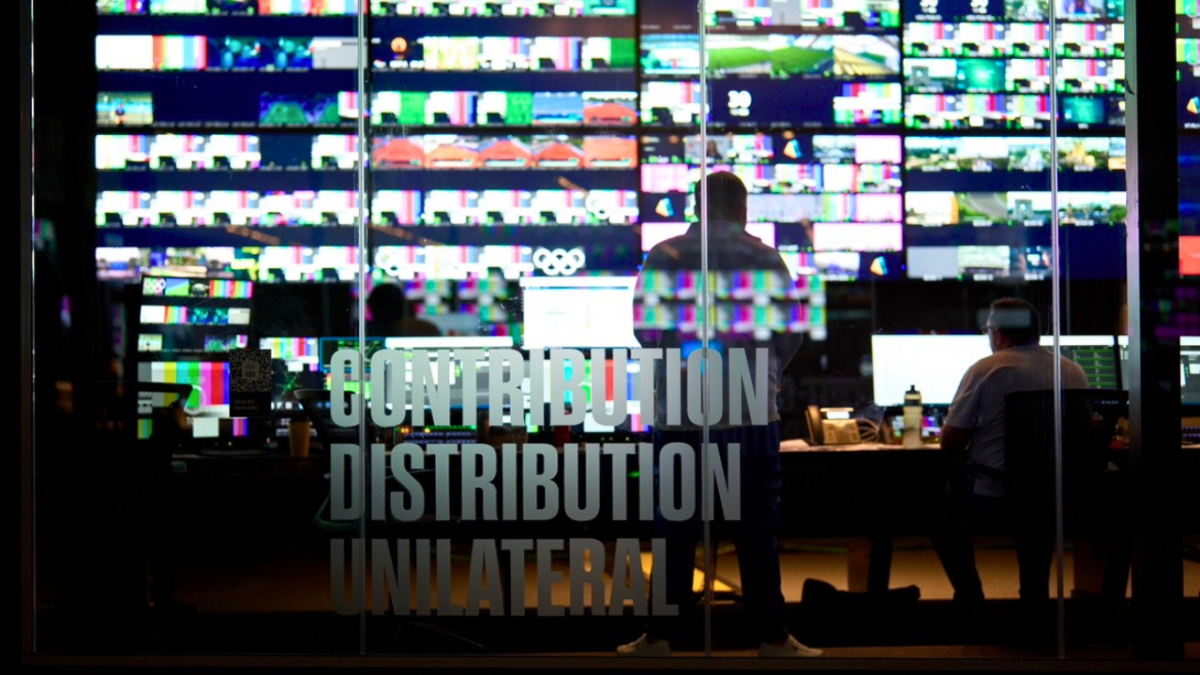

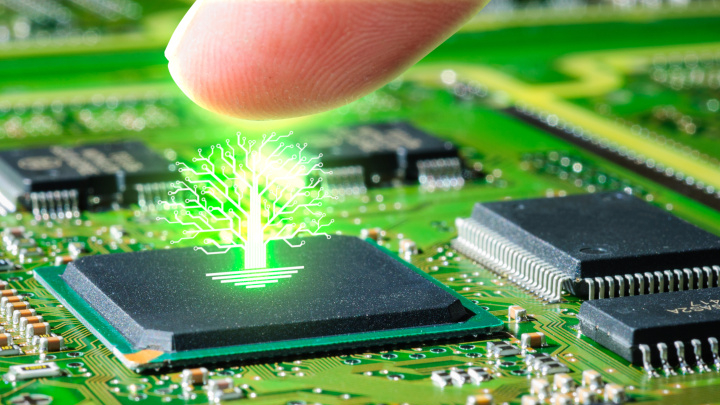

M6 uses 80% less energy than other trillion-level language models and is 11 times more energy-efficient. Photo credit: Shutterstock

Artificial intelligence (AI) has the power to change the world, so long as it doesn’t burn the planet down in the process.

Energy-hungry AI models are on a collision course with governments and corporations that have made sustainability pledges.

AI models of all shapes and sizes — from email spam filters to self-driving cars — learn through exposure to massive quantities of data, presented repeatedly following rounds of adjustments to improve their accuracy.

A decade ago, algorithms were trained on a personal computer. Today, training takes considerable specialized computing hardware. The energy burnt in the process, especially for AI language models, has ballooned in lockstep with technical advances in the field.

According to research lab OpenAI, the computing power used in deep learning grew 300,000-fold between 2012 and 2018.

To be sure, the size of these models is also growing and can now include trillions of parameters, which are configuration variables that are modified as the model learns. But is it worth it?

Training one large AI model generates carbon emissions equal to those of 315 people, or an entire 747 aircraft, traveling round trip between San Francisco and New York City, scientists at the University of Massachusetts Amherst calculated.

As climate change looms, this practice is not sustainable.

Enter Alibaba Group’s DAMO Academy. Last year, the research institute launched M6, the world’s first 10-trillion-parameter pre-training model, designed for multimodality and multitasking.

Despite its size, M6, or the Multi-Modality to Multi-Modality Multitask Mega-transformer, uses 80% less energy than other trillion-level language models and is 11 times more energy-efficient.

Alizila caught up with DAMO’s AI scientist Dr. Yang Hongxia to understand the importance of this energy-efficient AI, its applications, and the future of green AI models.

The following transcript has been edited for clarity and brevity.

Q. What’s behind the race to build big AI models?

A. Compared with traditional models, big AI models have more “neurons” for processing, much like the human brain. They are thus more intelligent and have greater computer processing power.

But big AI models are very energy-intensive. Google’s landmark AI projects require thousands of Tensor Processing Units (TPUs), which are powerful processors that speed up processing tasks and can be used for training artificial intelligence.

GPT-3, a powerful language model by OpenAI, is estimated to have consumed the same amount of energy in training as a car driving to the moon and back. That would be too damaging to the environment.

Large, power-hungry AI models are not sustainable in the long run because only giant tech companies can afford to use thousands of TPUs to run over 100-billion-parameter AI models, meaning AI would be accessible only to very few.

Q. What is unique about the M6 model?

A. Before M6, most large AI language models were trained in English and based on learning involving a single modality. For example, OpenAI’s GPT-3 is primarily a language model trained to predict the next words based on natural-language processing.

M6 is the first model trained in Chinese with multimodality understanding, covering data types such as image, text, video, audio and numerical data. M6 is like a super brain: if you feed it domain-specific data, it quickly becomes an expert in that domain.

M6 is also highly energy efficient. M6 is 50 times GPT-3’s size, but its energy consumption is only 1% of what GPT-3 needed for training. This is very important for reducing the model’s carbon footprint and increasing the accessibility of AI.

Q. Why did DAMO Academy invent M6?

A. In the beginning, it was because we faced resource constraints. Google and Facebook have plenty of TPU or graphics processing unit (GPU) resources to deploy, but most Chinese tech companies have relatively limited resources.

Assuming energy consumption levels are unchanged, 128 GPUs would suffice to train a 10-billion-parameter AI model. But training a trillion-parameter AI model would require 12,800 GPUs, far exceeding the capacity of most tech companies in China.

That’s when we realized that to scale, we’d have to deal with AI models’ energy consumption problem.
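The GPU figures in this answer follow from simple proportional scaling, which can be checked with a short sketch. It assumes, as the answer implies, that the number of GPUs needed grows linearly with parameter count; the function name `gpus_needed` and its defaults are hypothetical and are not part of M6 or any DAMO Academy tooling.

```python
def gpus_needed(target_params: int,
                base_params: int = 10_000_000_000,  # 10-billion-parameter baseline from the interview
                base_gpus: int = 128) -> int:        # GPUs quoted for that baseline
    """Estimate GPU count by scaling the baseline linearly with model size.

    Linear scaling is an assumption made for illustration only.
    """
    # Ceiling division so that a partial GPU rounds up to a whole one.
    return -(-base_gpus * target_params // base_params)


if __name__ == "__main__":
    one_trillion = 1_000_000_000_000
    print(gpus_needed(one_trillion))  # 12800, matching the figure quoted above
```

A trillion parameters is 100 times the 10-billion baseline, so the estimate is 128 × 100 = 12,800 GPUs under this assumption.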

Q. How will M6 be used?

A. Let me give you an example. M6 has been used to power searches and recommendations on [marketplace] Taobao, especially for text and image pairing.

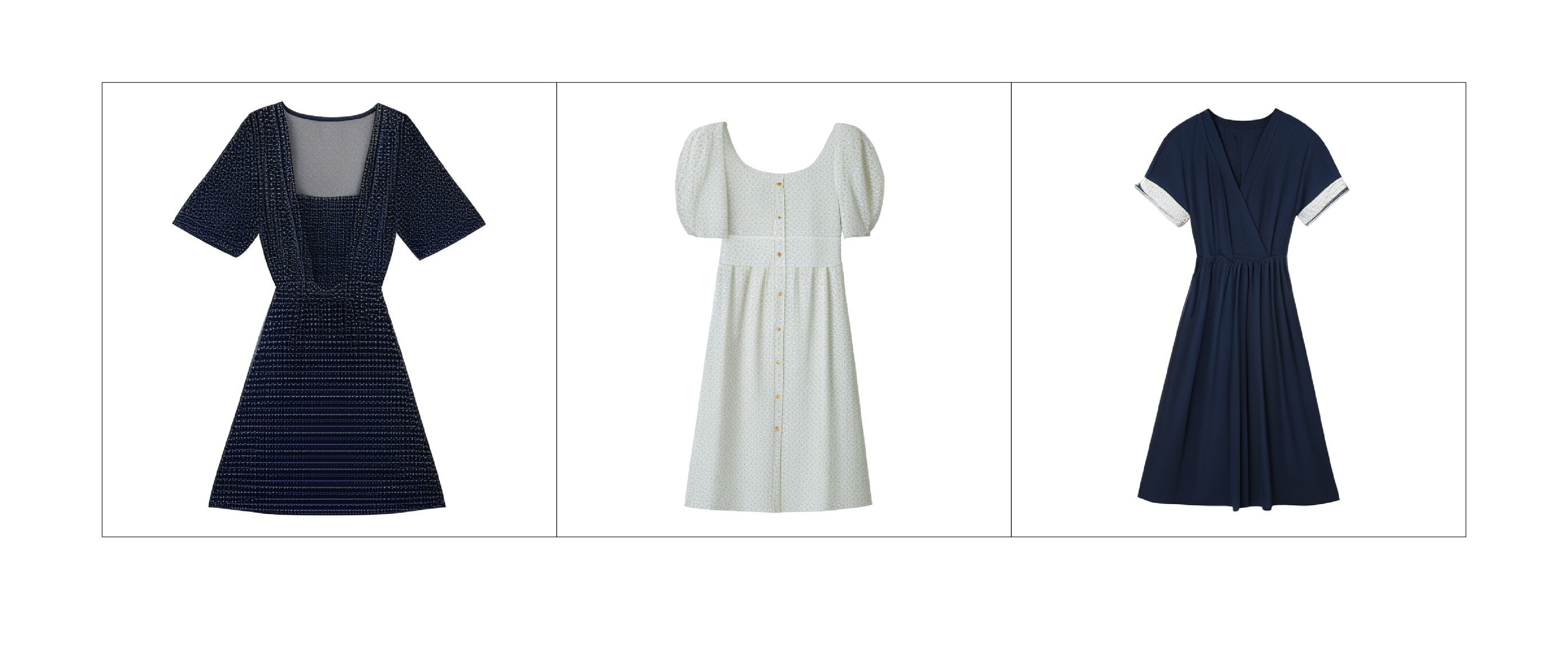

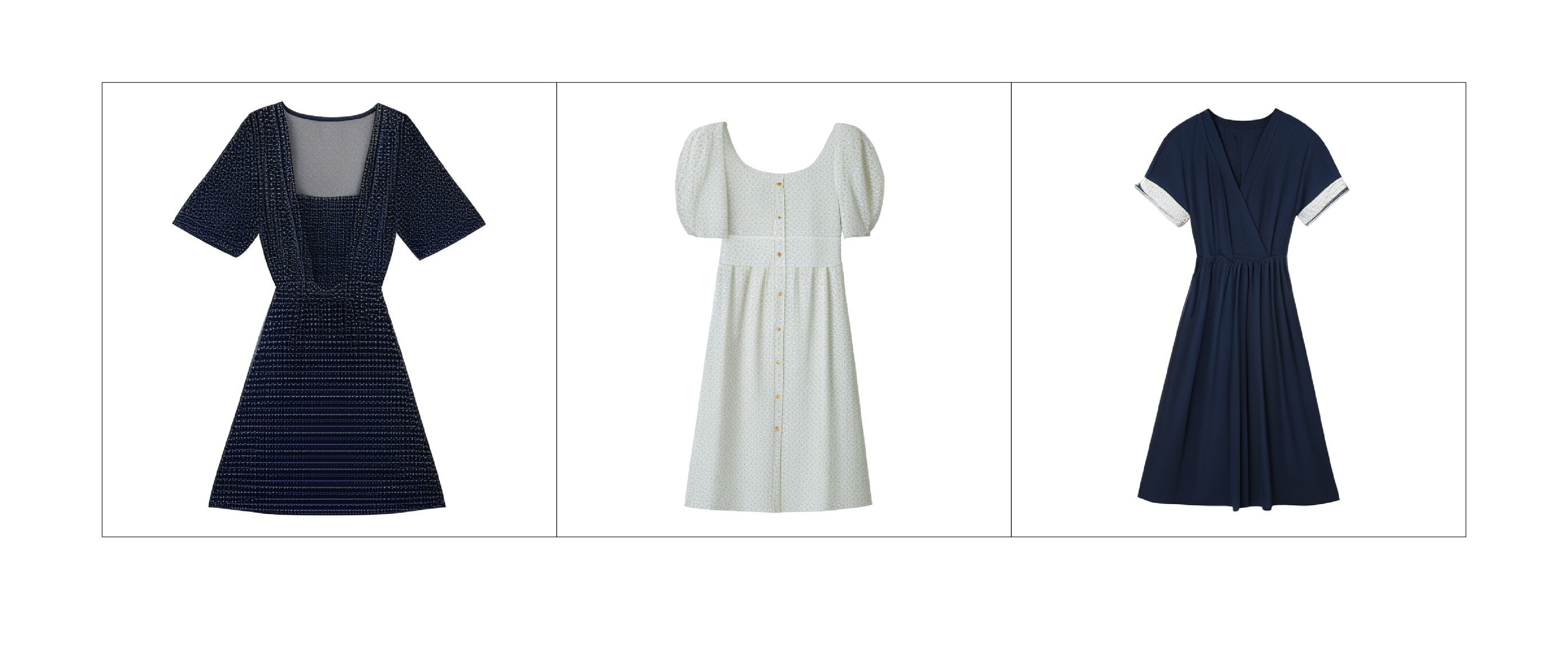

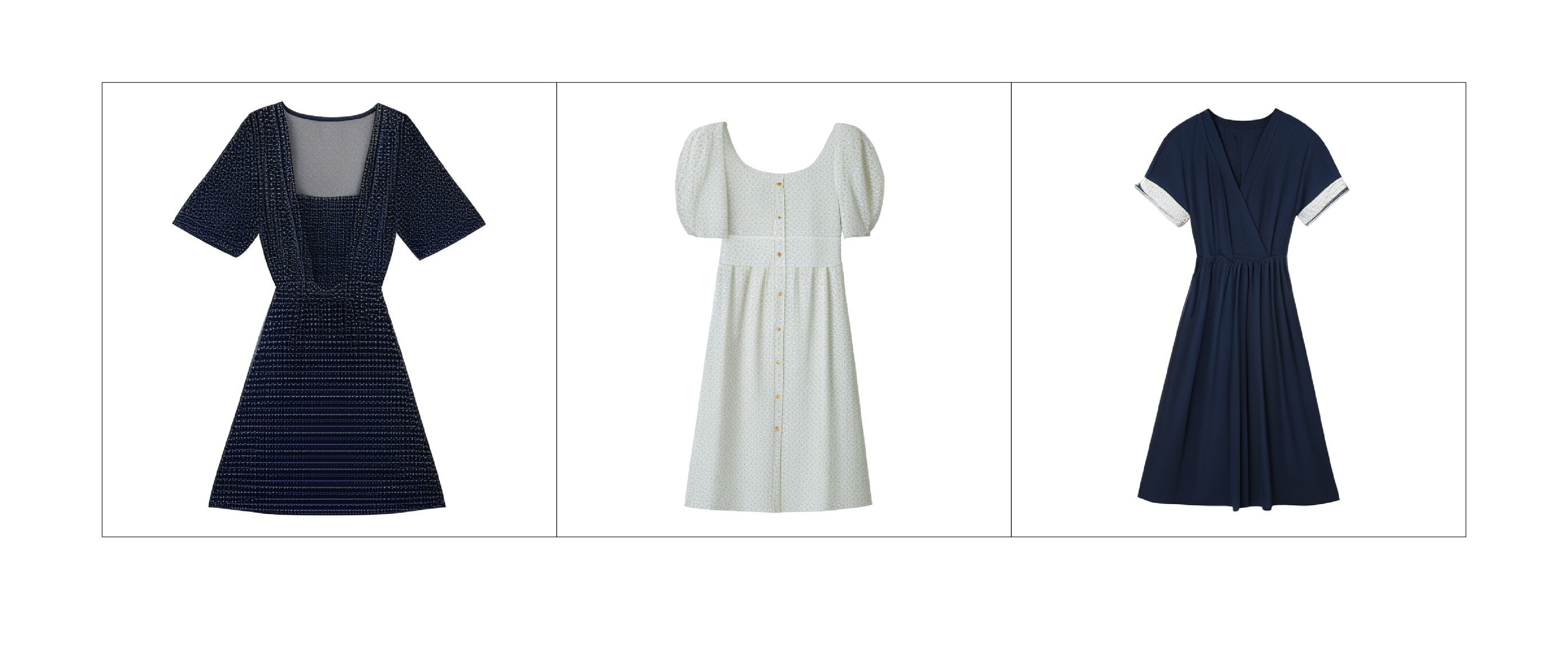

Millennials and Generation Z demand a personalized shopping experience. For example, they may want to search for a rugged mug but very few merchants write “rugged” in the product title. So they need an algorithm that understands both the text and the image to find the perfect text-to-image pairing.

Only M6, with cross-modality and multimodality, can understand the meaning of texts and images together in context.

Another application of M6 is in design and manufacturing. M6 can help designers generate sketches quickly through image synthesis. Designers can work on the sketches that M6 creates and shorten the production cycle from months to weeks.

Designs powered by M6, combined with more eco-friendly materials, could reduce the carbon footprint of T-shirt manufacturing by more than 30%.

Q. Why does DAMO Academy think we’ll see more co-evolution of small and large AI models?

A. Right now, training for large AI models is in the cloud. But edge computing is the future. From mobile phones to VR/AR glasses to self-driving vehicles, edge devices will process the data they collect and share insights near where the data is generated.

Because of data privacy concerns, users would prefer to have smaller AI models maintained on the edge instead of large AI models trained in the cloud. Edge computing would pave the way for true personalization of AI because everyone’s AI model would be diversified.

For active users, AI models would be updated regularly. For less active users, models would stay more stable. Small AI models on the edge would regularly be synchronized with the large AI models in the cloud to achieve co-evolution.